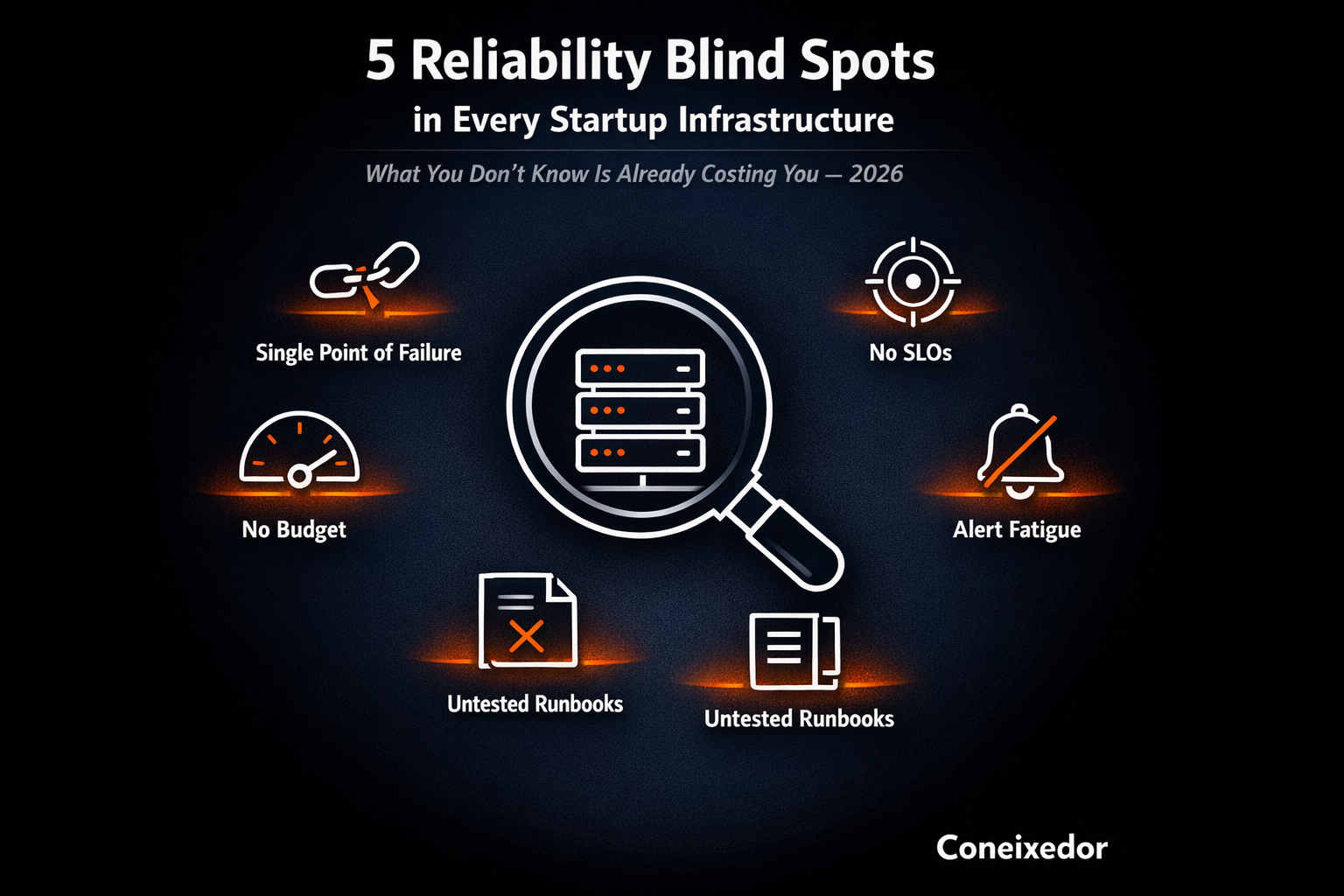

After auditing dozens of startup infrastructure setups, the same five reliability gaps appear almost every time. Here is what they are, why they happen, and exactly how to fix each one.

I run a free infrastructure audit every week. Fifteen minutes, five questions. The same five blind spots show up almost every time, not because the teams are bad engineers, but because nobody told them what to look for.

Blind spot 1: No SLOs

Teams have uptime metrics but no user-facing reliability commitments. This means nobody can answer the question: are we reliable right now?

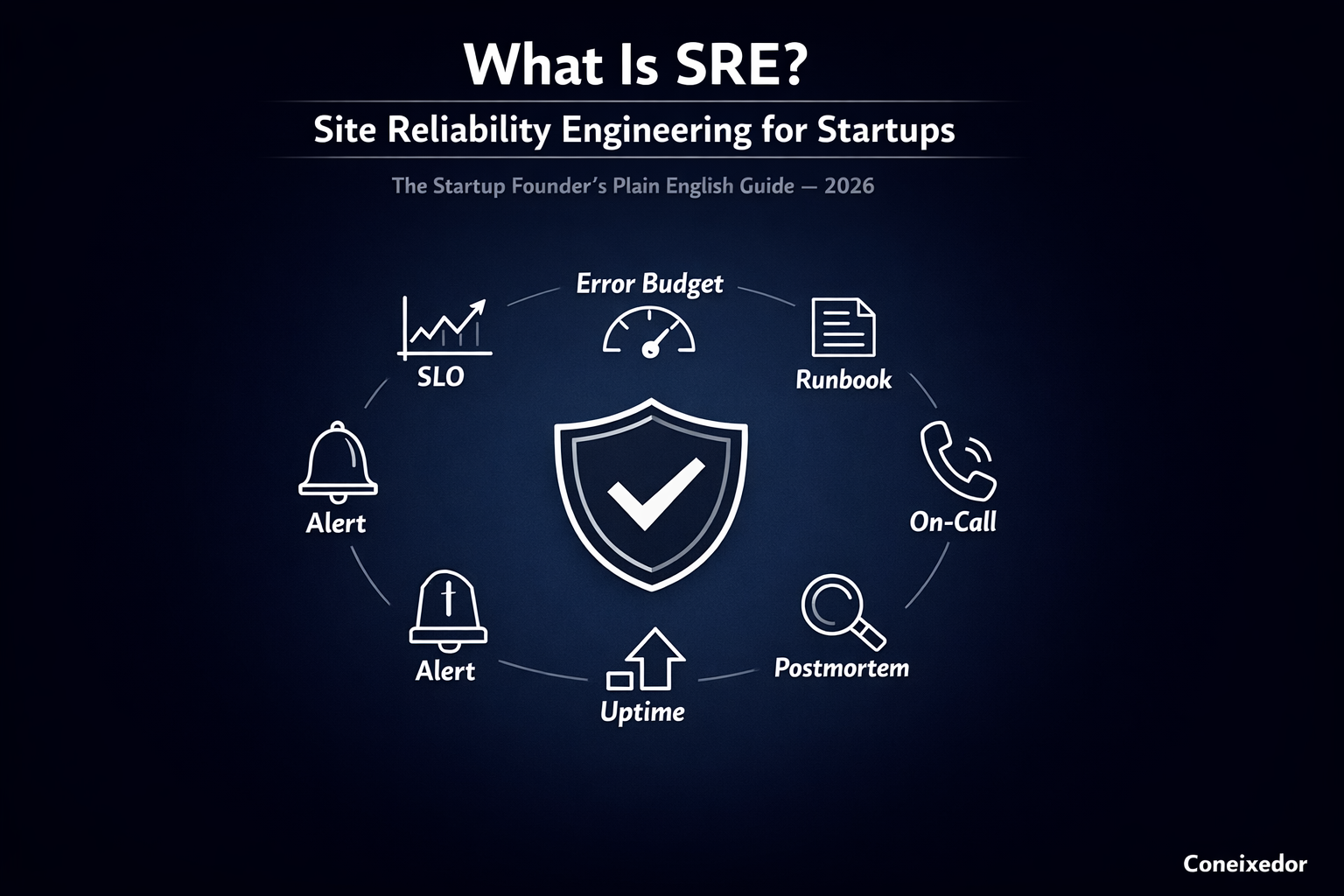

An SLO (Service Level Objective) is a specific, measurable promise your team makes about what your system delivers to users. Uptime metrics tell you your servers are running. SLOs tell you whether users are getting what you promised. Those are two very different questions.

Defining your first SLO is simpler than most teams expect. Pick the three most critical user journeys, choose a metric for each (availability, latency, or error rate), and agree on a realistic threshold. A practical starting point: 99.5% of checkout requests will succeed within 2 seconds over a rolling 30-day window. That sentence is worth more than any dashboard.
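To make the example SLO concrete, here is a minimal Python sketch of how that sentence could be measured. The `Request` record, the `slo_compliance` function, and the sample data are all hypothetical; a real setup would read these numbers from request logs or a metrics store.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Request:
    timestamp: datetime
    succeeded: bool
    latency_seconds: float


def slo_compliance(requests, now, window_days=30, latency_threshold=2.0):
    """Fraction of requests in the rolling window that succeeded within the threshold."""
    window_start = now - timedelta(days=window_days)
    in_window = [r for r in requests if window_start <= r.timestamp <= now]
    if not in_window:
        return None  # no traffic in the window, so nothing to measure
    good = sum(
        1 for r in in_window
        if r.succeeded and r.latency_seconds <= latency_threshold
    )
    return good / len(in_window)


# Hypothetical sample: 1000 checkout requests inside the window, 50 outside it.
now = datetime(2024, 6, 30)
sample = (
    [Request(now - timedelta(days=1), True, 0.4)] * 997     # fast and successful
    + [Request(now - timedelta(days=2), True, 3.1)] * 2     # succeeded, but too slow
    + [Request(now - timedelta(days=3), False, 0.2)] * 1    # failed
    + [Request(now - timedelta(days=45), False, 0.2)] * 50  # older than 30 days, ignored
)

compliance = slo_compliance(sample, now)
print(f"{compliance:.1%} against a 99.5% target")
```

Note that a slow success counts against the SLO just like a failure, because the promise is "succeed within 2 seconds", not just "succeed".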

Once your team has a number to point at, reliability stops being a vague anxiety and starts being a managed resource. Reliability conversations shift from "I think we are fine" to "we are at 99.7% against a 99.5% target." That shift is the foundation everything else in this list depends on.

Blind spot 2: No runbooks

When an incident happens, every engineer starts from scratch. Resolution time doubles. Stress triples. The 3am test reveals this gap instantly.

A runbook is a documented step-by-step procedure for handling a specific failure scenario, written for someone who has never seen this failure before. The 3am test is simple: could your least experienced on-call engineer resolve this incident in 20 minutes using only this document? If not, the runbook is not done.

Every useful runbook contains exactly five fields: trigger conditions (how do you know this runbook applies), first steps (the first three actions to take, mitigation before diagnosis), escalation path (who to call if 15 minutes pass without resolution), rollback procedure (how to get back to the last known good state), and a postmortem template link. Anything more and nobody reads it under pressure.
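One way to keep runbooks to those five fields is to treat them as a small data structure and check for gaps before an incident does. The sketch below is only an illustration: the `Runbook` class, the `missing_fields` helper, and the example content are assumptions, not a standard format.

```python
from dataclasses import dataclass, fields


@dataclass
class Runbook:
    trigger_conditions: str        # how do you know this runbook applies
    first_steps: list              # first three actions, mitigation before diagnosis
    escalation_path: str           # who to call if 15 minutes pass without resolution
    rollback_procedure: str        # how to get back to the last known good state
    postmortem_template_link: str  # where the postmortem template lives


def missing_fields(runbook):
    """Return the names of fields that are still empty."""
    return [f.name for f in fields(runbook) if not getattr(runbook, f.name)]


# Hypothetical draft with two fields not yet written.
draft = Runbook(
    trigger_conditions="Checkout error rate above 5% for 5 minutes",
    first_steps=[
        "Check the latest deploy",
        "Roll back if the deploy is under an hour old",
        "Post status in the incident channel",
    ],
    escalation_path="Page the secondary on-call after 15 minutes",
    rollback_procedure="",
    postmortem_template_link="",
)

print(missing_fields(draft))
```

A draft that still reports missing fields would not pass the 3am test, whatever else it contains.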

The most common mistake is writing runbooks only after an incident, from memory, at 4am. By then the details are wrong and the gaps are invisible. The process that works: write runbooks in calm moments before incidents happen, then update them after every incident. Post-incident updates add to that groundwork; they do not replace it.

Blind spot 3: No error budget

Without an error budget, every feature-vs-reliability tradeoff is decided by gut feel and politics instead of data.

An error budget is the complement of your SLO: the specific amount of unreliability your system is allowed. If you promise 99.9% uptime, your error budget is 0.1%, which equals about 8.76 hours per year or 43.8 minutes per month. That is not a penalty. It is a resource your team can spend deliberately.
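The arithmetic behind those figures is short enough to sketch in Python. The `error_budget` function is a hypothetical helper, and it assumes a 365-day year with the monthly figure taken as one twelfth of the yearly one.

```python
HOURS_PER_YEAR = 365 * 24  # 8760, ignoring leap years


def error_budget(slo_percent):
    """Return (hours per year, minutes per month) of allowed unreliability."""
    budget_fraction = (100 - slo_percent) / 100
    hours_per_year = budget_fraction * HOURS_PER_YEAR
    minutes_per_month = hours_per_year * 60 / 12  # average month
    return hours_per_year, minutes_per_month


hours, minutes = error_budget(99.9)
print(f"{hours:.2f} hours per year, {minutes:.1f} minutes per month")
```

For a 99.9% target this prints 8.76 hours per year and 43.8 minutes per month. Changing the argument to 99.5 shows how much more room a looser target gives.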

Once you define an SLO, you get the error budget for free. The math is automatic. What is not automatic is agreeing on the policy: what happens when the budget runs low. The most common policy is straightforward. When the error budget is more than 50% spent for the month, no risky feature deployments. When it is fully spent, the team focuses on reliability until the budget resets.
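That policy can be written down as a few lines of Python so nobody has to interpret it during a tense week. This is a sketch under assumptions: the `deployment_policy` function and its return strings are illustrative, and the thresholds are the 50% and 100% marks described above.

```python
def deployment_policy(budget_minutes, downtime_minutes):
    """Map error budget consumption for the month to a deployment rule."""
    spent = downtime_minutes / budget_minutes
    if spent >= 1.0:
        return "reliability work only until the budget resets"
    if spent > 0.5:
        return "no risky feature deployments"
    return "normal deployments"


# Hypothetical month with a 40-minute budget.
for downtime in (10, 25, 40):
    print(downtime, "minutes down:", deployment_policy(40, downtime))
```

The value of encoding the policy is less the code than the agreement it forces: product and engineering settle the thresholds once, in advance.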

This makes every reliability conversation data-driven instead of political. The error budget makes the decision. Product and engineering stop arguing and start looking at the same number.

Blind spot 4: Alerts without owners

Monitoring exists. Alerts fire. But the alert goes to a shared Slack channel and nobody owns it. Every unowned alert is a future incident waiting to happen.

The shared Slack channel problem is specific. When an alert fires into a channel of twelve engineers, each one assumes someone else is handling it. The result: five minutes of silence, then three engineers start debugging the same thing in parallel, and resolution takes twice as long as it should. This is not a people problem. It is a systems problem with a direct fix.

Assigning ownership to every alert takes one morning. Go through your alerting configuration and assign a named person (not a team, a person) to every alert. That person does not have to resolve every alert. They have to acknowledge it, determine severity, and either fix it or escalate it. When a team is the owner, it is still unclear who acts; a named person removes that doubt.

The rule is simple: if an alert has no named owner, it is not a real alert. Either assign it or delete it. Unowned alerts that fire persistently teach engineers to ignore all alerts, which is worse than having no monitoring at all.
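The assign-or-delete rule is easy to check mechanically. The sketch below assumes alerts can be exported as a list of dictionaries with `name` and `owner` keys; that shape, the `unowned_alerts` function, and the example names are all hypothetical, so adapt them to whatever your alerting tool actually produces.

```python
def unowned_alerts(alerts, team_names):
    """Return alert names with no owner, or with a team instead of a person."""
    flagged = []
    for alert in alerts:
        owner = (alert.get("owner") or "").strip()
        if not owner or owner in team_names:
            flagged.append(alert["name"])
    return flagged


# Hypothetical export of an alerting configuration.
alerts = [
    {"name": "checkout-error-rate", "owner": "maria"},
    {"name": "db-replication-lag", "owner": "platform-team"},  # a team, not a person
    {"name": "disk-usage-high", "owner": ""},
    {"name": "queue-depth"},                                   # owner key missing
]
team_names = {"platform-team", "backend-team"}

flagged = unowned_alerts(alerts, team_names)
print(flagged)
```

Every name in the output is a candidate for either a named owner or deletion.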

Blind spot 5: Known single points of failure nobody has time to fix

Every team has them. Everyone knows. The ticket sits at the bottom of the backlog. The fix is scheduling one reliability sprint per quarter.

Single points of failure stay unfixed even when the whole team knows about them for a specific reason: they compete directly with feature work for engineering time, and they never win. The Jira ticket titled "database failover" sits at priority 2 for eight months while the product roadmap ships. Then the database goes down and it becomes the only thing that matters for the next three hours.

The reliability sprint model treats reliability work like a product sprint. One week per quarter, the entire engineering team works only on reliability improvements. No feature work, no exceptions. The backlog is ranked by blast radius: which single point of failure, if it fails today, causes the most damage? That one goes first. The model works because it makes reliability work scheduled and expected instead of reactive and endless.

Prioritising which single points of failure to fix first is a one-variable decision. Rank every known failure point by the answer to this question: if this breaks during a customer demo, a funding call, or a peak traffic moment, what is the cost? The highest-cost items go first, regardless of engineering complexity.
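As a sketch of that one-variable ranking in Python: the `FailurePoint` class, the cost scores, and the effort estimates below are hypothetical. The effort field is recorded but deliberately left out of the sort, since the ranking ignores engineering complexity.

```python
from dataclasses import dataclass


@dataclass
class FailurePoint:
    name: str
    cost_if_it_fails: int     # estimated cost at the worst moment, in units your team agrees on
    engineering_weeks: float  # recorded for planning, not used for ranking


def reliability_sprint_order(points):
    """Highest-cost failure points first, regardless of effort."""
    return sorted(points, key=lambda p: p.cost_if_it_fails, reverse=True)


# Hypothetical backlog.
backlog = [
    FailurePoint("single build server", cost_if_it_fails=3, engineering_weeks=0.5),
    FailurePoint("database without failover", cost_if_it_fails=10, engineering_weeks=3),
    FailurePoint("one engineer holds the DNS login", cost_if_it_fails=7, engineering_weeks=0.1),
]

for point in reliability_sprint_order(backlog):
    print(point.cost_if_it_fails, point.name)
```

In this example the database failover work goes first even though it is the most expensive item to fix.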

When all five blind spots are closed, the feeling in the engineering team changes. Deployments stop feeling dangerous. On-call shifts stop being dreaded. The team knows what is running, who owns it, and what to do when something breaks. That calm is what a well-built infrastructure feels like.

If you want to know which of these five blind spots exist in your infrastructure before they become incidents, a free audit at coneixedor.com will surface them in fifteen minutes.

Frequently Asked Questions

What is an infrastructure audit?

A structured review of your cloud environment, deployment processes, monitoring setup, and reliability practices to find gaps before they cause incidents.

What is an SLO?

An SLO (Service Level Objective) is a specific measurable reliability target for a user-facing system. It turns reliability from a vague goal into a number your team can manage.

What does an infrastructure audit cover?

At minimum: SLO definitions, runbook coverage, alert ownership, error budget policy, known single points of failure, and incident response process.

What is an error budget?

An error budget is the complement of your SLO. If you promise 99.9% uptime, your error budget is 0.1%, or about 8.76 hours per year of acceptable downtime.